Spacetime ‘Branes: The Multiverse

Friday, December 2nd, 2011 by David Darling

Today I have the pleasure of hosting my friend David Darling, an astronomer and well-known science writer, who will update us on the multiverse. Dr. Darling has written many books of popular science, including Life Everywhere: The Maverick Science of Astrobiology (in which he mentions my views on Rare Earth and the Anthropic Principle). He also maintains a much-visited website, The Worlds of David Darling, which contains The Internet Encyclopedia of Science. His latest book, Megacatastrophes!: Nine Strange Ways the World Could End, his second collaboration with Dirk Schulze-Makuch, will appear next spring.

The multiverse, or theory of many universes, is very much in the news right now because some recent work strongly suggests that it might be true. The basic, mind-boggling idea is that “out there” is more than just the bubble of space-time we happen to live in – what we call the Universe. There are trillions and trillions (and trillions and trillions…) of other universes. Don’t even bother trying to imagine them all or your head might explode.

Surprisingly, the word “multiverse” has been around for a long time. It was coined way back in 1895 by the American philosopher William James, although he probably had something quite different in mind than what modern scientists are talking about.

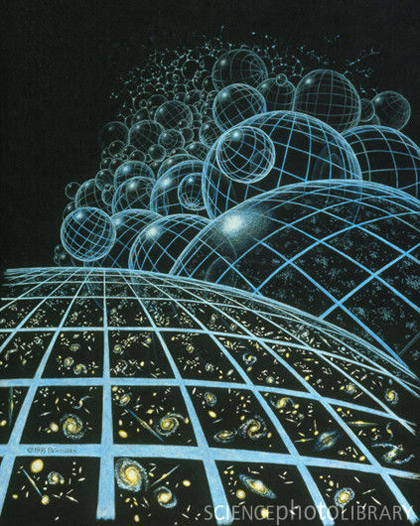

And what are they talking about? Here’s the first problem we run into in tackling the multiverse concept. When scientists talk about the multiverse they can mean different things. To a cosmologist – someone interested in the origins and evolution of the universe as a whole – the multiverse is a consequence of the nature of the vacuum, which isn’t as empty as we usually suppose. The cosmologist’s multiverse stems from something called chaotic inflationary theory, which itself is a variety of the theory of cosmic inflation. In a nutshell, our universe is like a bubble of spacetime that spawned from a great foaming ocean of spacetime that’s always existed and always will exist. In its first few moments, our universe expanded at a fantastic rate before settling down to a more sedate rate of growth. But beyond our universe are other, similar bubbles – other universes – each expanding and each with its own physical constants and laws. One estimate puts the number of such universes at an outrageous 10 to the power 10 to the power 10 million (in other words 1 followed by 10 to the 10 million zeros – aargh!).

On the other hand, to a quantum physicist – someone who deals with the very smallest things in nature – the concept of the multiverse is a different beast. If you believe in something called Everett’s many-worlds interpretation of quantum mechanics, which a lot of quantum scientists do, every time an observation is made at the quantum (super-tiny) level, the universe splits into all the possible outcomes that could happen. I’m not even going to get into what counts as an “observation”! Some people say it has to involve a conscious or sentient observer (like a human being); others argue that any measuring instrument will do. It’s complicated. But the underlying message of Everett’s theory is that any time an event (such as a collision between particles) is watched, the universe splits in various ways to take account of all the possible outcomes. Needless to say, this gets pretty crazy pretty fast! If every outcome of every minuscule watched event gives rise to an entirely new universe, then the total number of universes in this quantum physical view of the multiverse is beyond mind-boggling.

So there are these two different multiverse scenarios – the one of the cosmologist and the one of the quantum physicist. And they aren’t mutually exclusive. They could both be right. Trying to figure out the consequences if both types of multiverse are real and co-existent very quickly overwhelms my brain’s paltry (and diminishing) collection of neurons. But let’s just focus on a couple of particulars. In the quantum physicist’s multiverse, there are bound to be a lot of universes that are very similar to the one we live in. In fact there are going to be a lot of universes with other you’s – some of them only very slightly different from the one that we’re in right now. The cosmologist’s multiverse also allows for a vast number of universes, but the chance of finding almost exact copies of you is more remote. Instead, the cosmologist’s multiverse is populated by an incredible variety of bubbles of space-time in which the laws and basic constants are expected to vary widely. Probably very few are capable of supporting life.

Another distinction between the two types of multiverse is their fundamental nature. The cosmologist’s multiverse is a bit easier to grasp. Put it this way: if there were a parallel you in a bubble-universe that was the product of chaotic inflation, then this other you would exist in the familiar three dimensions of space. But an alternative you in Everett’s many-worlds picture is a much more esoteric affair: a creature living in a different quantum branch of something called Hilbert space. Not being a mathematician, I won’t try to explain what Hilbert space is (Google it, if you’re interested). Suffice it to say, it’s an extremely important concept in quantum mechanics – but far from easy to visualize.

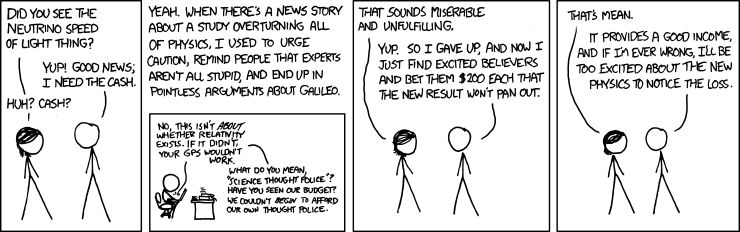

Now the exciting thing is, physicists are getting close to being able to test if the multiverse is real. If there are other universes beyond our own, then it’s likely we may have bumped into them in the past, resulting in the cosmic equivalent of fender benders. The impacts ought to show up as dents in the cosmic microwave background – the now much-cooled afterglow of the Big Bang. For some time, the European Space Agency’s Planck satellite has been mapping the microwave background to an unprecedented level of precision. The results will be out soon and may confirm the multiverse theory.

On a different front, theorists have made a discovery that goes to the very heart of quantum mechanics. They’ve shown it’s very likely that something called the wavefunction – the most important concept in the physics of the very small – isn’t a mere wave of probability as previously supposed, but a real physical object. The most far-reaching conclusion of this is that Everett’s many-worlds interpretation is correct and the quantum physicist’s multiverse is also a fact.

So get ready for expanding horizons. Just a few centuries ago, people thought there was only one sun. Then it turned out the stars were suns too. Then we discovered that our Milky Way Galaxy, with its hundreds of billions of stars, was just one among many galaxies. Then it turned out that galaxies were arranged in clusters, which in turn formed superclusters. Now it seems our universe is just one of an unbelievable number of universes. Who’s to say the hierarchy doesn’t extend beyond the multiverse?

Images: David Darling, Life Everywhere; bubble universes, Sally Bensusen/SciencePhotoLibrary.